Most SaaS teams collect customer feedback the wrong way. They send a quarterly survey blast, get a 3–5% response rate, and wonder why the data never drives a decision. The problem isn't that customers won't give feedback — it's that the method, timing, and question don't match the moment.

Different stages of the customer lifecycle call for entirely different feedback methods. An NPS survey sent on day 2 of onboarding is noise. The same survey at day 30 is signal. An exit survey embedded in the cancellation flow gets a 40% response rate. The same survey sent by email a week later gets 8%.

This guide covers the 9 most effective ways to collect customer feedback — with response rate benchmarks, the right timing for each, and what to do with the data once you have it.

Quick comparison: all 9 methods

Before going deep on each method, here's how they compare at a glance:

In-product NPS surveys — Response rate: 20–40% · Best for: active users · Timing: triggered by behavior or quarterly

Post-interaction CSAT — Response rate: 15–35% · Best for: specific touchpoints · Timing: within 5 minutes of event

CES surveys — Response rate: 15–30% · Best for: friction diagnosis · Timing: after high-effort tasks

Exit surveys — Response rate: 30–60% · Best for: churn analysis · Timing: at cancellation trigger

PMF survey — Response rate: 20–35% · Best for: product-market fit validation · Timing: 30+ days of active use

Email NPS — Response rate: 5–20% · Best for: inactive or churned users · Timing: quarterly or post-churn

Website feedback widget — Response rate: 1–5% of visitors · Best for: anonymous visitors · Timing: always-on

User interviews — Response rate: N/A (scheduled) · Best for: qualitative discovery · Timing: monthly, by segment

Link surveys — Response rate: 2–10% · Best for: external audiences · Timing: ad hoc

1. In-product NPS surveys

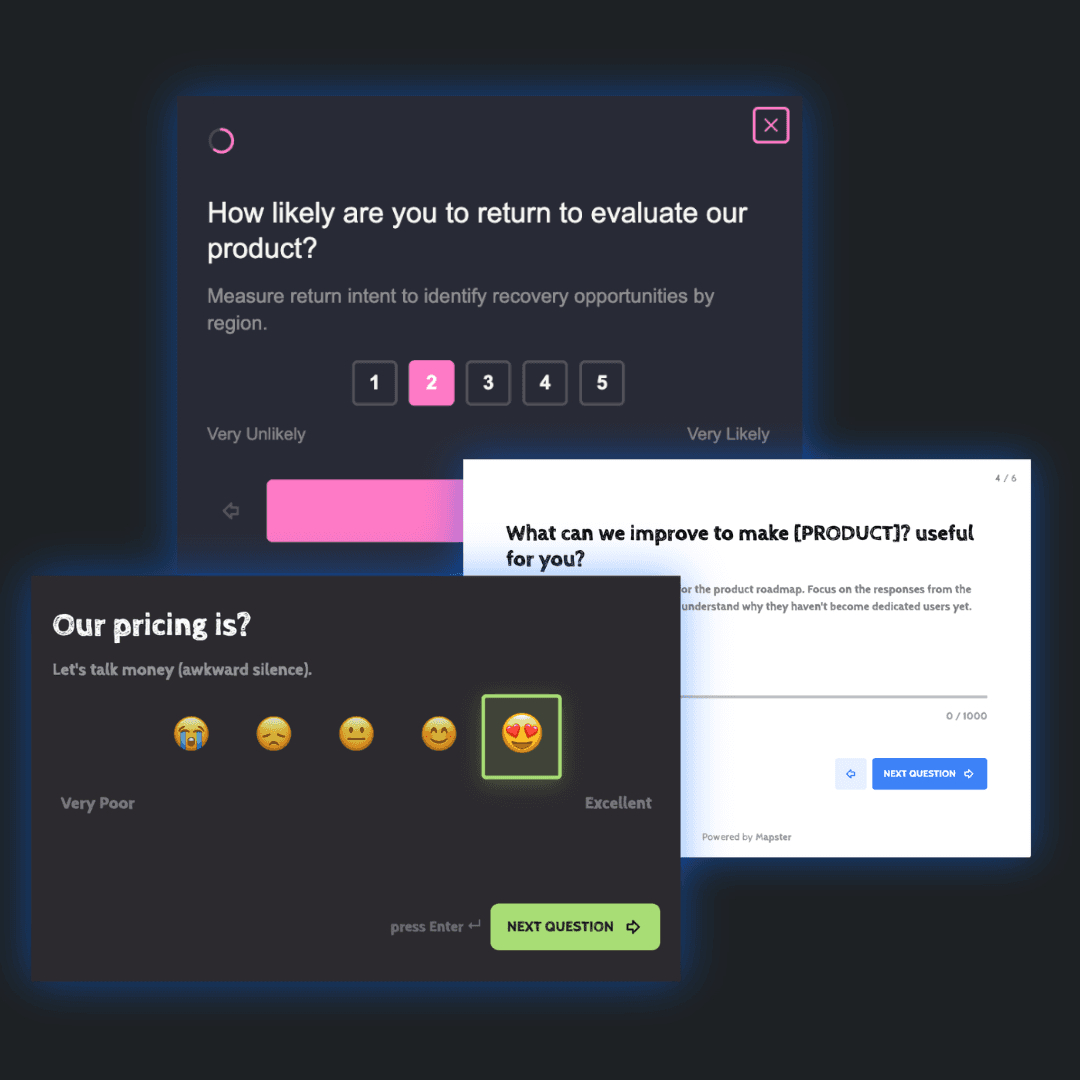

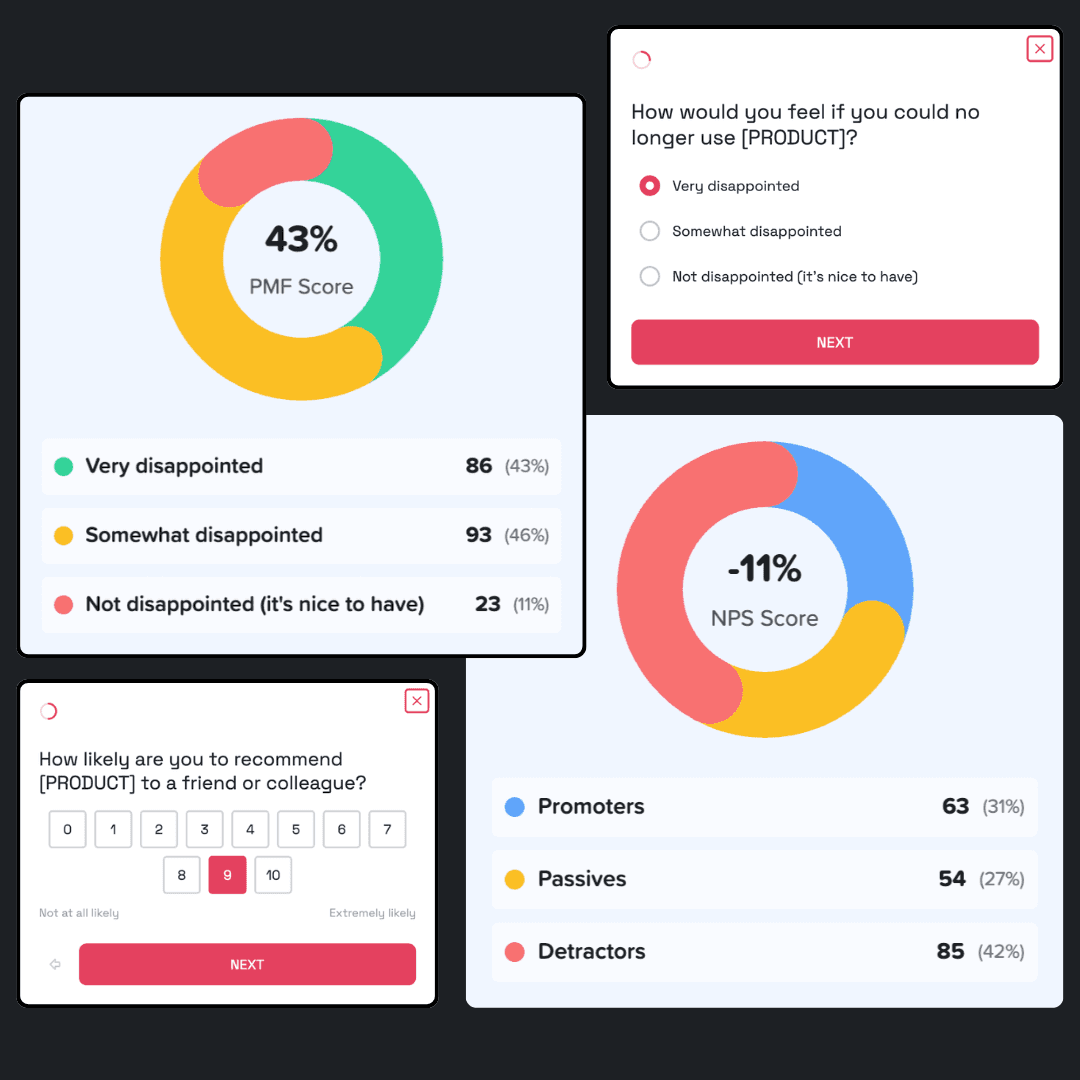

In-product NPS is the highest-leverage feedback method for most SaaS teams. It asks "How likely are you to recommend us to a friend or colleague?" (0–10) inside the product, triggered after a meaningful interaction — not on a schedule, not by email, but at the moment when the product experience is fresh.

The reason in-product outperforms email NPS by 2–4x on response rate is simple: the user is already in your product, they've just done something meaningful, and a one-question survey takes 8 seconds. No inbox competition, no context switching, no forgetting what the experience felt like.

When to send it

Quarterly relationship survey: Send to all active users every 90 days. This is your baseline — the number you track over time to measure whether loyalty is improving or eroding.

Day 30 after signup: Users who reach day 30 have survived onboarding and experienced your core value. This is the best moment to measure how well your product delivered on its initial promise.

60 days before renewal: For annual contracts, an NPS sent 60 days before renewal gives you time to recover detractors before the renewal conversation starts.

After a major product release: A targeted NPS to users who've adopted the new feature tells you whether it moved the needle on loyalty.
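The four timing rules above can be combined into one eligibility check. The Python sketch below is hypothetical. The User fields and the trigger labels are invented for illustration. It also assumes the check runs once a day per user, because the day-30 and 60-days-before-renewal rules are exact-day comparisons.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class User:
    """Hypothetical user record; field names are illustrative only."""
    signup: date
    last_nps: Optional[date] = None    # date of the most recent NPS survey, if any
    renewal: Optional[date] = None     # renewal date for annual contracts
    adopted_new_feature: bool = False  # set on adoption of a major release; clear after surveying


def nps_trigger(user: User, today: date) -> Optional[str]:
    """Return why an in-product NPS survey should be shown today, or None."""
    days_since_signup = (today - user.signup).days
    if days_since_signup < 30:
        return None  # still onboarding: a score this early is mostly noise
    if days_since_signup == 30:
        return "day_30"
    if user.renewal is not None and (user.renewal - today).days == 60:
        return "pre_renewal"
    if user.adopted_new_feature:
        return "post_release"
    # Quarterly relationship baseline: at most once every 90 days.
    if user.last_nps is None or (today - user.last_nps).days >= 90:
        return "quarterly"
    return None


print(nps_trigger(User(signup=date(2024, 1, 1)), date(2024, 1, 31)))  # day_30
```

The rules are checked in order, so a user who reaches day 30 gets the onboarding-value survey instead of the quarterly one.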

The follow-up question matters more than the score

The NPS number is a lagging indicator. The open-ended follow-up — "What's the main reason for your score?" — is where the actionable data lives. Every detractor (0–6) response is a churn signal with a named root cause. Every promoter (9–10) response names a value prop you can use in sales and marketing.

What to do with the data

Detractors (0–6): Follow up personally within 48 hours. Name the specific problem they mentioned. Give a concrete next step and a deadline. A personal response to a detractor can recover the relationship — a template response accelerates churn.

Passives (7–8): These users are satisfied but not loyal. Identify the gap between where they are and Promoter territory. The open-ended response usually names it. Address it proactively.

Promoters (9–10): Activate them. Ask for a G2 or Capterra review. Request a referral. Invite them to a case study. Warm advocacy from a satisfied customer converts far better than cold outreach.

Segment your NPS by user attributes — plan tier, role, tenure, company size. An overall NPS of 42 can hide a score of 8 among your highest-revenue enterprise users. The aggregate number lies; the segment breakdown tells the truth.
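NPS is the percentage of promoters (9–10) minus the percentage of detractors (0–6), so it ranges from -100 to +100. Here is a minimal Python sketch of the segment breakdown. It assumes each response is stored as a (segment, score) pair. The segment labels and scores are made up for illustration.

```python
from collections import defaultdict


def nps(scores):
    """Net Promoter Score: % promoters (9-10) minus % detractors (0-6), from -100 to +100."""
    if not scores:
        raise ValueError("no responses")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return round(100 * (promoters - detractors) / len(scores))


def nps_by_segment(responses):
    """Compute NPS separately for each segment in a list of (segment, score) pairs."""
    groups = defaultdict(list)
    for segment, score in responses:
        groups[segment].append(score)
    return {segment: nps(scores) for segment, scores in groups.items()}


responses = [
    ("self-serve", 10), ("self-serve", 9), ("self-serve", 8),
    ("enterprise", 3), ("enterprise", 9),
]
print(nps([score for _, score in responses]))  # 40
print(nps_by_segment(responses))  # {'self-serve': 67, 'enterprise': 0}
```

Five responses are far too few to act on. The example only shows the pattern described above: a blended score of 40 can sit alongside an enterprise segment at 0.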

2. Post-interaction CSAT surveys

Customer Satisfaction Score (CSAT) measures satisfaction with a specific touchpoint — not your product overall, but a single interaction: a support ticket, an onboarding call, the first use of a new feature, a billing change. It asks "How satisfied were you with [interaction]?" on a 1–5 scale.

CSAT is the best feedback method for transactional moments because it's tied to a specific event the user can still recall clearly. Send it within 5 minutes of resolution — the further you get from the event, the less accurate the rating.

When to send it

After every support interaction: Send within 5 minutes of ticket closure. Support CSAT is a direct churn predictor — users who rate support 1–2 are significantly more likely to cancel within 90 days.

After onboarding completes: Catch early friction before it compounds into churn. A low onboarding CSAT at day 7 is an intervention opportunity; discovering it at day 90 is too late.

After first use of a new feature: Measures whether the feature delivered on its promise — more reliable than tracking adoption metrics alone.

What to do with the data

For individual low scores (1–2): respond personally within 24 hours and solve the original problem completely. For aggregate low scores on a specific touchpoint: investigate whether it's systemic — a pattern of 2/5 support CSAT on a specific issue type means a broken process, not a bad interaction.

Key metric: Track % of responses that score 4–5. For SaaS support, a healthy CSAT is 85–90%+. Below 80% signals a systemic problem worth investigating.
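The CSAT metric above is the share of 1–5 ratings that score 4 or 5. A small Python sketch follows, with the 85% and 80% benchmarks from this section passed in as parameters. The "watch" band between the two cutoffs is an assumption of this sketch, not part of the benchmark. Running the same calculation per touchpoint or per issue type helps separate systemic problems from one-off bad interactions.

```python
def csat_percent(ratings):
    """Share of 1-5 ratings that are 4 or 5, as a percentage."""
    if not ratings:
        raise ValueError("no ratings")
    satisfied = sum(1 for r in ratings if r >= 4)
    return 100 * satisfied / len(ratings)


def csat_status(ratings, healthy=85.0, alarm=80.0):
    """Classify a touchpoint's CSAT against the benchmarks in this section.

    The 'watch' band between the two cutoffs is an assumption of this sketch.
    """
    pct = csat_percent(ratings)
    if pct >= healthy:
        return "healthy"
    if pct < alarm:
        return "investigate"
    return "watch"


support_ratings = [5, 5, 4, 4, 3, 5, 2, 5, 4, 5]
print(csat_percent(support_ratings), csat_status(support_ratings))  # 80.0 watch
```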

3. CES surveys after high-effort tasks

Customer Effort Score (CES) asks "How easy was it to [complete task]?" on a 1–7 scale. Gartner research shows CES is the strongest predictor of churn among the three core feedback metrics — more than satisfaction, more than loyalty. Users don't expect delight; they expect ease. Make something hard enough and they leave, even if they like the product.

CES is not a relationship metric — it's a friction diagnostic. Use it specifically on the interactions where users are most likely to struggle, then use the data to eliminate that friction systematically.

When to send it

After support ticket resolution: "How easy was it to get your issue resolved?" If the answer is "very difficult," the problem isn't just the agent — it's the process.

After onboarding setup: "How easy was it to get [Product] set up?" Onboarding friction is the #1 driver of early churn for SaaS products.

After data import or export: High-complexity tasks that users need to complete before they can get value. High effort here delays time-to-value.

After billing changes: Plan upgrades, downgrades, seat additions. High effort here erodes trust even when the change itself goes through correctly.

What to do with the data

CES data answers "where": it identifies which workflows have friction. The open-ended follow-up answers "what": it names the specific step that was hard. Because the question asks how easy a task was, the workflows to fix first are the 3 with the lowest CES scores (the highest effort). Eliminate that friction before adding new features. Friction reduction has higher ROI per engineering hour than feature addition for most mid-stage SaaS products.
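Ranking workflows by average CES is a short calculation. This Python sketch assumes responses arrive as (workflow, score) pairs on a 1–7 scale where 7 means "very easy." Under that assumption, the lowest average means the highest effort. The workflow names and scores are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def highest_effort_workflows(responses, top_n=3):
    """Return the top_n workflows with the lowest average ease score.

    Assumes a 1-7 scale where 1 = very difficult and 7 = very easy.
    """
    by_workflow = defaultdict(list)
    for workflow, score in responses:
        by_workflow[workflow].append(score)
    averages = {w: mean(scores) for w, scores in by_workflow.items()}
    # Lowest average ease = highest effort, so sort ascending.
    return sorted(averages.items(), key=lambda item: item[1])[:top_n]


responses = [
    ("onboarding", 3), ("onboarding", 4),
    ("data import", 2), ("data import", 3),
    ("billing change", 6),
    ("support", 5), ("support", 4),
]
print(highest_effort_workflows(responses))
# [('data import', 2.5), ('onboarding', 3.5), ('support', 4.5)]
```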

4. Exit surveys at cancellation

Exit surveys are the most underused high-value feedback method in SaaS. When a user clicks "Cancel my subscription," you have one moment to ask why — and users at this stage are motivated to be honest in a way they rarely are during active use.

The right format: a multiple-choice list of churn reasons (max 6 options) plus an optional open-text field. Don't use only open text — you'll get vague answers. Don't use only multiple choice — you'll miss the nuance. The combination gives you quantifiable churn attribution and qualitative context.

The churn reason list that works

Too expensive — price-to-value mismatch

Missing a feature I need — feature gap

Switching to a different tool — competitive loss

No longer need it — use case change or job change

Too hard to use — usability or friction

Other — always include this as optional open text

What to do with the data

Aggregate exit survey results by churn reason monthly. If "Too expensive" represents 40% of cancellations, you have a pricing problem — not a feature problem. If "Missing a feature I need" is 35%, cross-reference with your product backlog to find the highest-churn-impact missing feature.
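The monthly aggregation above is a group-and-count. A Python sketch follows. It assumes each cancellation is stored as a (date, reason) pair using the reason labels listed above. The sample data is invented.

```python
from collections import Counter, defaultdict
from datetime import date


def churn_reasons_by_month(cancellations):
    """Map 'YYYY-MM' -> {reason: % of that month's cancellations}."""
    counts = defaultdict(Counter)
    for when, reason in cancellations:
        counts[when.strftime("%Y-%m")][reason] += 1
    report = {}
    for month, reasons in sorted(counts.items()):
        total = sum(reasons.values())
        # most_common() lists the biggest churn reason first.
        report[month] = {r: round(100 * n / total) for r, n in reasons.most_common()}
    return report


cancellations = [
    (date(2024, 5, 2), "Too expensive"),
    (date(2024, 5, 9), "Too expensive"),
    (date(2024, 5, 20), "Missing a feature I need"),
    (date(2024, 5, 28), "Switching to a different tool"),
    (date(2024, 5, 30), "Too expensive"),
    (date(2024, 6, 3), "No longer need it"),
]
print(churn_reasons_by_month(cancellations))
```

Rounded shares may not add up to exactly 100 in every month. That is fine for spotting which reason dominates.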

For high-value churned users, have a founder or account manager follow up personally within 48 hours. Ask one question: "What would it have taken to keep you?" A small percentage will return. Even those who don't will tell you something worth more than their subscription revenue.

5. PMF survey (for early-stage teams)

The Product-Market Fit survey asks one question: "How would you feel if you could no longer use this product?" with three choices: Very disappointed / Somewhat disappointed / Not disappointed. If 40% or more answer "Very disappointed," you have product-market fit. Below 40% and you need to iterate before investing in growth.

The two follow-up questions that complete the picture: "What would you use instead?" (reveals your real competitive set) and "What type of person would most benefit from this product?" (lets users define your ICP for you — the Superhuman method).

When to run it

First time: When you can collect at least 40 responses from users who have been using the product for 30+ days. With fewer than 40 responses, the percentage isn't statistically meaningful.

Ongoing: Every quarter for early-stage teams, after every major product change, and whenever you're considering a pivot. The trend matters as much as the absolute number: moving from 28% to 36% over two quarters is a strong signal that you're heading in the right direction.
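The PMF score is the share of respondents who answer "Very disappointed." This Python sketch applies the 40-response minimum and the 40% benchmark from this section. Both are exposed as parameters so it is clear where they enter. The answer strings are assumed to match the three survey choices exactly.

```python
def pmf_score(answers, min_responses=40):
    """Percent of answers that are 'Very disappointed', or None if the sample is too small."""
    if len(answers) < min_responses:
        return None  # too few responses for the percentage to mean much
    very = sum(1 for a in answers if a == "Very disappointed")
    return 100 * very / len(answers)


def has_pmf(answers, threshold=40.0):
    """True if the PMF score meets the benchmark (40% by default)."""
    score = pmf_score(answers)
    return score is not None and score >= threshold


answers = (["Very disappointed"] * 18
           + ["Somewhat disappointed"] * 20
           + ["Not disappointed"] * 12)
print(pmf_score(answers), has_pmf(answers))  # 36.0 False
```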

What to do with the data

If you're above 40%: identify your 'very disappointed' users and figure out what they have in common. These are your ICP. Double down on acquiring more of them.

If you're below 40%: don't scale. Read the 'What would you use instead?' responses — they tell you who you're actually competing with. Read what your 'very disappointed' users love most — they tell you which value prop is real.

6. Email NPS surveys

Email NPS is the right channel when you can't reach users inside the product — inactive users, churned users, or audiences outside your app. It has lower response rates than in-product (5–20% vs 20–40%), but it's the only option for users who aren't logging in.

The single most important thing about email NPS: embed the 0–10 scale directly in the email body. Don't link to an external page. Every additional click you require can cut your response rate by 30–50%. The best implementations let users click their score in the email itself. That click registers the response and opens a follow-up page for their qualitative comment.
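One way to embed the scale is to render each of the 11 scores as its own link, so a single click both records the score and opens the comment page. The Python sketch below builds that HTML row. The base URL and the uid and score query parameters are hypothetical placeholders for whatever endpoint your survey tool or backend actually exposes.

```python
from html import escape
from urllib.parse import urlencode


def nps_email_row(base_url, user_id):
    """Build an HTML table row of 0-10 score links for an email body.

    base_url and the query parameter names are hypothetical; the endpoint is
    assumed to record the score on click and then show a comment form.
    """
    cells = []
    for score in range(11):
        href = f"{base_url}?{urlencode({'uid': user_id, 'score': score})}"
        # escape() turns '&' into '&amp;' so the attribute is valid HTML.
        cells.append(f'<td><a href="{escape(href)}">{score}</a></td>')
    return "<table><tr>" + "".join(cells) + "</tr></table>"


row = nps_email_row("https://example.com/nps", "u123")
```

A production version would likely replace the raw user ID with a signed token, so a forwarded email cannot be used to submit a score on someone else's behalf.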

When to use it

Quarterly for inactive users: Users who haven't logged in for 30+ days can't be surveyed in-product. Email NPS catches them before they silently churn.

7–30 days after cancellation: Gives users time to reflect on the full experience and often surfaces insight the exit survey missed.

For competitive research: Emailing users of competitor products is common, and often done openly in communities where teams discuss their tooling stack.

7. Website feedback widget

A persistent feedback button on your marketing site and docs captures unsolicited feedback from anonymous visitors. It's always-on: no scheduling, no triggers, no campaign management needed.

Response rate is low (1–5% of page visitors), but signal quality is high. Users who seek out a feedback button are motivated — they've hit something frustrating enough to say something. That motivation bias is useful: you're capturing the friction strong enough to overcome the activation energy of clicking a button.

Where to place it

Pricing page: Captures purchase objections from visitors who didn't convert. 'What stopped you from signing up today?' unlocks your biggest conversion blockers.

Documentation: Captures confusion before it becomes a support ticket. A simple thumbs up/down on every docs page gives you usability signal at scale.

Onboarding flow: A feedback button during setup captures the friction new users hit before they even reach your in-product surveys.

8. User interviews

User interviews give you the qualitative depth that no survey can match. In a 30–45 minute conversation, you can follow up on any answer, probe for root causes, observe hesitation in real time, and discover problems you didn't know to ask about. Surveys tell you what. Interviews tell you why.

The tradeoff is scale. You can run 100 NPS surveys in a week; you can do 5 quality interviews. That's not a reason to skip interviews — it's a reason to be deliberate about who you talk to and what you're trying to learn.

Who to interview

New signups (days 3–7): What brought them here, what they were hoping to accomplish, what their first impression was. Best source of positioning and onboarding insight.

Power users: The users who log in most often and use the most features. They know your product better than anyone and have strong opinions about what's missing.

Recently churned users: The most valuable and hardest to schedule. A 20-minute call with someone who just cancelled tells you more than 50 exit survey responses. Offer a gift card; it significantly increases how many people say yes to the call.

Prospects who didn't convert: What stopped them? This data improves sales and onboarding more than any A/B test.

The questions that unlock the most useful insights

"What were you trying to accomplish when you first looked for a product like ours?" — Reveals the real trigger for buying.

"Walk me through what happened the first time you used [feature]." — Let them narrate. Don't interrupt.

"What almost stopped you from signing up?" — Surfaces objections your landing page hasn't addressed.

"If this product disappeared tomorrow, what would you do?" — Names your real competitive alternatives.

How many interviews do you need? Five per user segment. By the fifth, you're hearing repeated themes — that's when you have enough signal to act.

9. Link surveys for external audiences

A link survey is distributed via URL — shared in Slack communities, emailed to a cold list, posted on social media, or embedded in a newsletter. It's the right method when you need to reach people outside your product: non-customers, prospective users, or audiences in a market you're evaluating.

The main risk: self-selection bias. Who clicks the link shapes the results. Distribute across multiple channels and acknowledge the bias when reading results.

When to use them

Pre-product market research: Before you build, validate that the problem exists. 'How do you currently solve [X]?' distributed to your target audience.

Competitive research: Survey users of competitor products. 'What do you like most / least about [Competitor]?' is a common question, often asked openly in product communities.

Content research: 'What's your biggest challenge with [topic]?' distributed to a relevant community. Surfaces blog and product ideas directly from your audience.

Building a feedback stack by stage

Early-stage: under 100 active users

Qualitative depth beats quantitative breadth at this stage. You need to understand why things are happening, not just measure that they are.

Start with: User interviews (method 8) — 5 per segment. PMF survey (method 5) — once you hit 40 active users. Exit survey (method 4) — from day one, every cancellation.

Don't run NPS yet — with fewer than 40 responses, percentage-based metrics aren't meaningful.

Growth-stage: 100–1,000 active users

Add: In-product NPS (method 1) quarterly. Post-support CSAT (method 2) after every ticket. CES after onboarding (method 3).

Segment your NPS by plan tier immediately — the aggregate number will mislead you if you have a mix of free and paid users.

Scale-stage: 1,000+ active users

Add: Website feedback widget (method 7) on pricing and docs. Email NPS for inactive users (method 6). Ongoing monthly user interviews per segment.

At scale, assign ownership: support owns CSAT, product owns NPS and PMF, CS owns renewal-window NPS, growth owns exit surveys.

The step most teams skip: closing the feedback loop

Collecting feedback is the easy part. Telling customers what changed because of their feedback is what builds loyalty. Most teams collect and analyze. Almost none close the loop — and that's the step that converts detractors into promoters.

With detractors: Tell them what you fixed. 'You mentioned the export was broken — we shipped a fix. Would you be willing to take another look?' This converts detractors into passives at a rate no marketing campaign matches.

With feature requesters: When you ship something a user asked for, tell them. 'You mentioned needing CSV export 3 months ago — it's live.' This is the fastest path to a promoter.

Publicly: A monthly 'What we fixed based on your feedback' email to your user base demonstrates that you listen — and increases response rates in your next survey cycle.
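Closing the loop with feature requesters is mostly bookkeeping. Keep a log of who asked for what, and when a feature ships, pull the list of people to notify. The Python sketch below is hypothetical. The FeedbackLog class and the feature keys are invented for illustration, and it assumes each request is tagged with a feature key when it is logged.

```python
from datetime import date


class FeedbackLog:
    """Hypothetical record of which users asked for which feature, and when."""

    def __init__(self):
        self._requests = {}  # feature key -> list of (email, date requested)

    def record(self, feature, email, requested_on):
        self._requests.setdefault(feature, []).append((email, requested_on))

    def notify_list(self, shipped_feature):
        """Everyone who asked for a feature, once each, with their earliest request date."""
        requests = sorted(self._requests.get(shipped_feature, []), key=lambda r: r[1])
        seen, result = set(), []
        for email, requested_on in requests:
            if email not in seen:
                seen.add(email)
                result.append((email, requested_on))
        return result


log = FeedbackLog()
log.record("csv-export", "ana@example.com", date(2024, 2, 1))
log.record("csv-export", "li@example.com", date(2024, 3, 15))
log.record("csv-export", "ana@example.com", date(2024, 4, 2))
for email, asked in log.notify_list("csv-export"):
    print(f"To {email}: you asked for CSV export on {asked:%B %d}, and it's live.")
```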

Start with one, do it well

The worst feedback mistake isn't running the wrong method — it's running all nine before you have a team to act on any of them. Survey fatigue is real. Too many surveys with no visible outcome and response rates drop permanently.

Pick the method that matches your biggest current unknown. If you don't know whether you have product-market fit, run the PMF survey. If you're losing users and don't know why, add an exit survey today. If NPS is low but you don't know which segment is driving it, segment your next run by plan tier.

One method, executed well, with a clear action attached to every response, is worth more than nine methods running in parallel with no one reading the data.
